AI News Daily

Midjourney V7 Makes a Stunning Debut / Super General Agent Genspark / Claude for Education

📌 Table of Contents:

- 🎨 Midjourney V7 Makes a Stunning Debut

- 💡 Greg Isenberg Interprets the “Golden Rules” of the AI Revolution

- 🔍 Super General Agent Genspark Appears

- 🇨🇳 Jimeng AI 3.0 and Doubao AI Upgrade

- Anthropic Launches Claude for Education

- 🤖 Latest Turing Test Shows GPT-4.5 is Highly Human-like

- 🛍️ Kling AI: Turns Static Images into Professional Product Display Videos in Seconds

- 🛡️ Google DeepMind Releases AGI Safety Blueprint

PART 01: Midjourney V7 Makes a Stunning Debut

Highlights?

- Major Architectural Change: Midjourney V7 released its Alpha version on April 3/4. CEO David Holz stated it has a “completely different architecture” from previous versions: a system-level rebuild with new datasets and improved language processing.

- Quality Leap: Image quality, texture details, and realism of light and shadow have significantly improved, especially in skin details and the coherence of human bodies and hands, reducing the “uncanny valley” effect.

- Smarter Understanding: Comprehension and adherence to complex text prompts have greatly increased. It’s claimed to handle 70% of prompts that V6 struggled with.

- Personalization First: V7 is the first version with personalization enabled by default. Users can establish a personal aesthetic profile by quickly rating images (approx. 200 images in 5 minutes). The model’s output aligns better with user preferences, and it supports creating multiple profiles (e.g., based on mood boards).

- Draft Mode: A new “Draft Mode” renders images about 10 times faster at half the cost. It supports text or voice input for rapid prototyping and cuts GPU consumption to roughly a quarter.

- Speed Mode Adjustments: Offers Turbo (faster, more expensive) and Relax (cost-effective, unlimited generation but slower) modes. A standard speed mode is coming soon.

- Future Prospects: The highly anticipated “Omni-Reference” system (for character/object referencing) is in the final testing phase. It aims to reproduce elements (logos, characters, objects, etc.) from reference images with high fidelity and will be released in a subsequent update. Upscaling, editing, and similar features currently rely on V6, and Midjourney plans to update V7 weekly over the next 60 days.
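To make the Draft Mode figures concrete, here is a minimal arithmetic sketch. The baseline of 40 seconds and 1.0 cost unit per standard image is a hypothetical placeholder, not a Midjourney figure; only the 10x speedup and the halved cost come from the claims above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModeEstimate:
    seconds_per_image: float
    cost_per_image: float


def draft_mode_estimate(standard: ModeEstimate,
                        speedup: float = 10.0,
                        cost_factor: float = 0.5) -> ModeEstimate:
    """Apply the claimed Draft Mode ratios to a baseline estimate."""
    return ModeEstimate(
        seconds_per_image=standard.seconds_per_image / speedup,
        cost_per_image=standard.cost_per_image * cost_factor,
    )


def images_within_budget(mode: ModeEstimate, budget: float) -> int:
    """How many images a fixed budget buys in the given mode."""
    return int(budget // mode.cost_per_image)


# Hypothetical baseline, chosen only for illustration.
standard = ModeEstimate(seconds_per_image=40.0, cost_per_image=1.0)
draft = draft_mode_estimate(standard)

print(draft.seconds_per_image)               # 4.0
print(images_within_budget(standard, 20.0))  # 20
print(images_within_budget(draft, 20.0))     # 40
```

Under these assumptions, the same budget buys twice as many iterations, and each one comes back ten times sooner, which is why the mode suits rapid prototyping.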

What Can Practitioners Consider?

- Practitioners (Designers, Artists, Creative Workers): V7 provides more powerful and controllable creation tools. Enhanced image quality and realism meet higher standards for commercial or artistic work; improved text understanding lowers the barrier to realizing complex ideas; personalization helps the AI better understand your style; and Draft Mode significantly accelerates creative iteration and prototyping. The upcoming Omni-Reference feature could ease the long-standing challenge of keeping characters and brand elements consistent, improving workflow efficiency.

- Ordinary People (AI Enthusiasts, General Users): V7 makes AI image generation smarter, easier to use, and yields better results. Default personalization helps you get images matching your taste faster; draft mode lowers the barrier to entry, allowing low-cost, rapid transformation of ideas into images; convenient features like voice input further enhance the experience. This signifies that AI image generation technology is rapidly maturing and becoming widespread, enabling everyone to express creativity more easily.

Access:

https://www.midjourney.com/home

PART 02: Greg Isenberg Interprets the “Golden Rules” of the AI Revolution

Highlights?

- Productivity in the AI Era: Late Checkout CEO Greg Isenberg believes AI will amplify content creation, becoming a “stress test” for human productivity. Those who can leverage AI will see their value increase, while those who cannot will see it decrease.

- Shift in Human Roles: Emphasizes the need for humans to abandon subjective assumptions and recognize that AI, with proper guidance, might perform better. The human role shifts from pure creator to “orchestrator” and “prompter” in AI-assisted creation.

- AI Golden Rules: Proposes seven key trends for entrepreneurship and business models in the AI era: vertical AI applications, mobile-first AI applications, the rise of AI intermediaries, demand for AI workflow designers, winner-take-all market dynamics, using AI to reshape traditional products, and the importance of distribution channels.

What Can Practitioners Consider?

- Practitioners (Entrepreneurs, Product Managers, Investors): Isenberg’s “Golden Rules” provide a valuable framework for finding business opportunities and formulating strategies in the AI era. They point to potential growth areas (vertical, mobile), new professional roles (workflow designers), and critical elements to focus on (distribution, reshaping traditional products).

- Ordinary People (Professionals, Students): Reminds us to actively embrace and learn AI tools to enhance personal productivity and remain competitive. Understanding the new model of human-AI collaboration, shifting from “executor” to “guide,” might be key to future work.

Related Link 🔗:

https://twitter.com/gregisenberg/status/1906697683089101113

PART 03: Super General Agent Genspark Appears

Highlights?

- Positioning: Genspark, launched by MainFunc, is a “super general agent” aiming to be a strong competitor to traditional search engines and AI research tools.

- Core Advantages: Emphasizes real-time interaction and fewer hallucinations (misinformation), and lets users guide and adjust results mid-process, with a focus on accuracy and interactive experience.

- Technical Architecture: Uses a hybrid agent architecture, integrating up to 8 large language models of different sizes from OpenAI, Anthropic, Google, etc., equipped with over 80 toolkits (search, data analysis, communication, etc.).

- Unique Features: Generates answers in natural language and organizes them into queryable “Sparkpages”; emphasizes filtering out ads and SEO content for fairer results; offers a “Deep Research” function that can analyze thousands of sources and millions of words to provide detailed answers (though it takes longer).

- Business Model: Currently free to use, with potential paid plans in the future.
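The hybrid agent idea described above (several models of different sizes plus a set of tools) can be pictured as a simple dispatcher. The sketch below is purely illustrative and is not Genspark's implementation: the step kinds, the `search_tool` and `analysis_tool` functions, and the `small_model` and `large_model` stand-ins are all hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Step:
    kind: str     # "search", "analysis", "draft", or "synthesize"
    payload: str


# Hypothetical stand-ins for real tools (search, data analysis, ...).
def search_tool(query: str) -> str:
    return f"[search results for '{query}']"


def analysis_tool(data: str) -> str:
    return f"[analysis of '{data}']"


# Hypothetical stand-ins for language models of different sizes.
def small_model(prompt: str) -> str:
    return f"small model: {prompt}"


def large_model(prompt: str) -> str:
    return f"large model: {prompt}"


TOOLS: Dict[str, Callable[[str], str]] = {
    "search": search_tool,
    "analysis": analysis_tool,
}
MODELS: Dict[str, Callable[[str], str]] = {
    "draft": small_model,        # cheap, fast intermediate steps
    "synthesize": large_model,   # final, higher-stakes answer
}


def route(step: Step) -> str:
    """Send a step to a matching tool, otherwise to a matching model."""
    if step.kind in TOOLS:
        return TOOLS[step.kind](step.payload)
    if step.kind in MODELS:
        return MODELS[step.kind](step.payload)
    raise ValueError(f"no handler for step kind: {step.kind}")


def run_plan(steps: List[Step]) -> List[str]:
    return [route(step) for step in steps]


plan = [
    Step("search", "Midjourney V7 release notes"),
    Step("draft", "outline the key changes"),
    Step("synthesize", "write the final summary"),
]
for line in run_plan(plan):
    print(line)
```

A real system would presumably choose handlers dynamically and let the user intervene between steps; the fixed lookup tables here only show the division of labor between tools, small models, and large models.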

What Can Practitioners Consider?

- Practitioners (Researchers, Analysts, Information Workers): Genspark offers a potentially powerful new tool. Its hybrid architecture and deep research capabilities could lead to more accurate, in-depth, and less biased information retrieval and analysis. The integrated toolkits could also improve efficiency.

- Ordinary People (Students, Knowledge Seekers): For users tired of ad and SEO interference in traditional search, Genspark provides a cleaner, more direct alternative for getting answers. The interactive “Sparkpages” format and deep research function are particularly suitable for users needing in-depth understanding of complex topics. It represents the trend of AI evolving from simple Q&A to comprehensive intelligent assistants.

Related Link: https://www.genspark.ai/

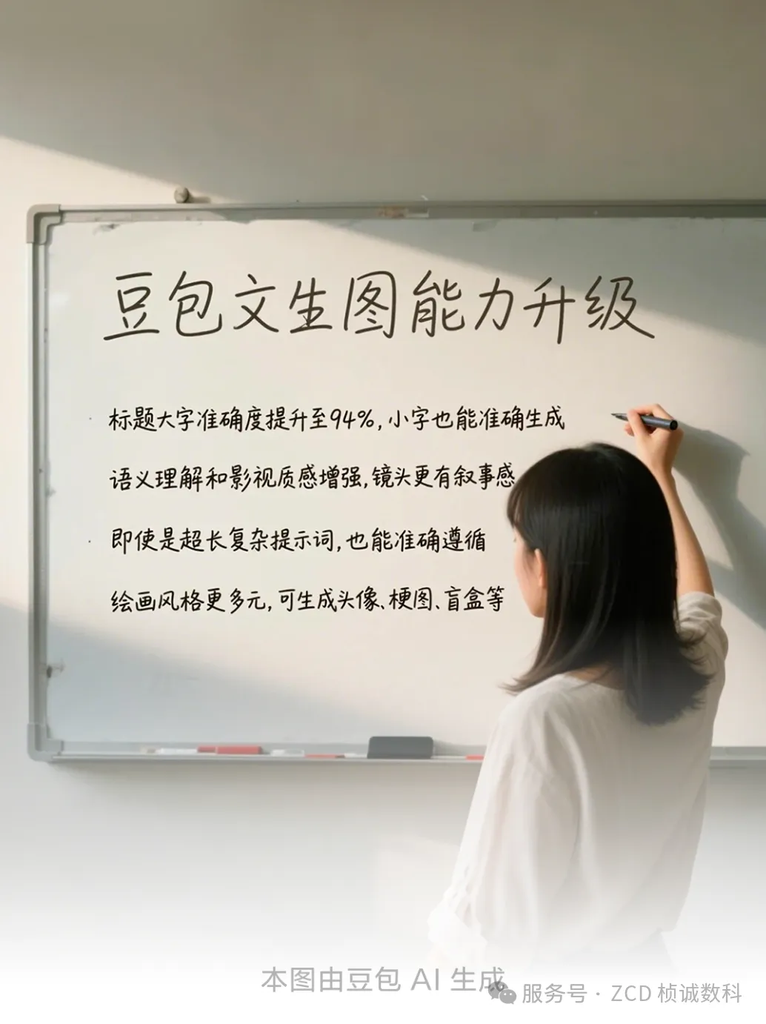

PART 04: Jimeng AI 3.0 and Doubao AI Upgrade

Highlights?

Jimeng AI 3.0 (ByteDance Jianying Team):

- Improved Chinese Capability: Generates more accurate Chinese text.

- Visual Effects: Emphasizes “cinematic quality” image effects.

- HD Output: Can directly generate 2K high-definition images.

- Composition & Understanding: Improved composition accuracy, complex scene handling, prompt responsiveness, and concept understanding.

Doubao AI (ByteDance):

- Text & Semantics: Improved accuracy in generating main and sub-headings within images, enhanced semantic understanding.

- Visuals & Style: Also pursues “cinematic quality,” offering a wider variety of painting styles.

- Complex Instructions: Enhanced ability to follow very long and complex prompts.

What Can Practitioners Consider?

- Practitioners (Creative & Marketing Professionals in the Chinese Market): The upgrades to these platforms mean more suitable AI creation tools for the local context and aesthetic preferences. Accurate Chinese generation, cinematic quality, and better understanding of complex instructions can better serve domestic advertising, media, and entertainment content creation.

- Ordinary People (Chinese Users): For the vast number of Chinese users, this further lowers the barrier to AI image creation and improves the experience. They can more easily generate images containing accurate Chinese text that match their ideas and style preferences, sparking more creative enthusiasm. This also reflects the global trend of AI technology localization.

Related Links:

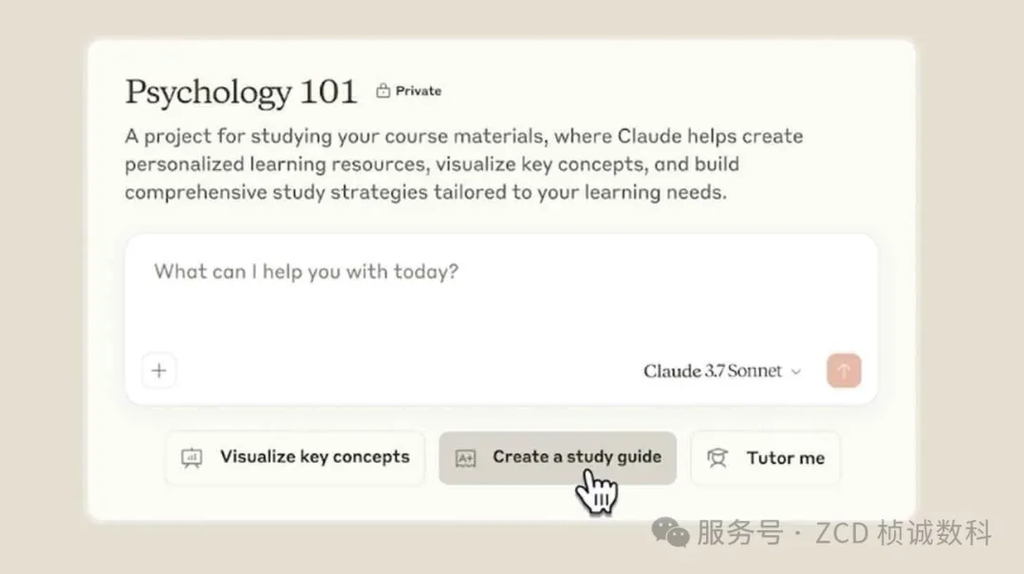

PART 05: Anthropic Launches Claude for Education

Highlights?

- New Initiative: Anthropic launches “Claude for Education,” aiming to responsibly integrate generative AI into higher education.

- Core Feature, “Learning Mode”: Rather than giving direct answers, the AI asks guiding questions and encourages students to explore and find evidence, aiming to cultivate critical thinking and independent learning skills.

- Practical Tools: Can help create essays, study guides, outline templates, and highlight key concepts.

- University Collaboration: Already partnering with universities such as Northeastern University and the London School of Economics (LSE) to roll out the service.

- Goal: Help universities leverage AI to improve teaching, learning, and administration while maintaining academic integrity.

What Can Practitioners Consider?

- Practitioners (Educators, Curriculum Designers, University Administrators): Claude for Education offers a constructive new approach and tool for utilizing AI, transforming it from a potential “cheating tool” into a “learning partner” and “thinking coach.” It helps address concerns about AI’s impact on students’ independent learning abilities and explores new teaching methods for the AI era.

- Ordinary People (Students, Parents): This signals a potentially more positive future role for AI in education. Students might gain an intelligent tutor capable of guiding thought and assisting research, rather than simply replacing thinking. The “Learning Mode” helps cultivate core future-ready skills: critical thinking and problem-solving.

Related Link: https://www.anthropic.com/news/introducing-claude-for-education

PART 06: Latest Turing Test Shows GPT-4.5 is Highly Human-like

Highlights?

- Astonishing Results: A UC San Diego study used a rigorous three-party Turing test, in which a human interrogator chats simultaneously with one human and one AI and then picks the human. When given a “human-like personality,” OpenAI’s GPT-4.5 was judged to be the human a staggering 73% of the time, more often than the actual human participants were.

- Comparison Model: Under the same conditions, Meta’s LLaMa-3.1-405B was perceived as human 56% of the time, comparable to human performance.

- Detection Difficulty: Even when participants used strategies like social small talk, distinguishing the AI proved difficult.

- Reason for Success: The study suggests GPT-4.5’s success owes more to its fluent emotional expression and carefully crafted “personality” than to superior logical reasoning.
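For readers who want to see what the 73% and 56% figures mean relative to chance, here is a minimal sketch. In a three-party test the interrogator picks one of two witnesses, so random guessing would label the AI as human 50% of the time. The sample size of 100 rounds is a hypothetical placeholder, not the study's actual count, and the normal-approximation z-score is a generic textbook check rather than the authors' analysis.

```python
import math
from typing import Sequence


def judged_human_rate(verdicts: Sequence[bool]) -> float:
    """Fraction of rounds in which the interrogator picked the AI as the human."""
    return sum(verdicts) / len(verdicts)


def z_score_vs_chance(rate: float, n: int, chance: float = 0.5) -> float:
    """Normal-approximation z-score for a one-sample proportion test."""
    standard_error = math.sqrt(chance * (1 - chance) / n)
    return (rate - chance) / standard_error


# Hypothetical data: 100 rounds, 73 of which judged the AI to be the human.
verdicts = [True] * 73 + [False] * 27
rate = judged_human_rate(verdicts)
z = z_score_vs_chance(rate, len(verdicts))

print(f"{rate:.2f}")  # 0.73
print(f"{z:.1f}")     # 4.6
```

With the same hypothetical 100 rounds, a 56% rate gives a z-score of about 1.2, which is not clearly distinguishable from chance and fits the description of that model as comparable to human performance.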

What Can Practitioners Consider?

- Practitioners (AI Developers, Human-Computer Interaction Experts, Ethicists): This research empirically demonstrates the astonishing progress top large language models have made in mimicking human conversation, especially on social and emotional levels. It forces us to rethink the meaning of the Turing test, the human-machine boundary, AI alignment, and the future form of human-computer interaction, and it raises new ethical challenges.

- Ordinary People (All Internet Users): This result reminds us that it may become increasingly difficult to tell whether the “people” we interact with online are real humans or AI. This showcases the power of the technology but also suggests we need to improve our discernment and consider the potential societal impacts of this ambiguity (e.g., trust, fraud).


PART 07: Kling AI: Turns Static Images into Professional Product Display Videos in Seconds

Highlights?

- Function Introduction: Kling AI has added an “Elements” tab to its “Image to Video” section.

- Core Workflow: Users can upload high-quality product images with clean backgrounds as the main element and add props or other related items as auxiliary elements to enhance product appeal. Then, by writing specific prompts, they describe the desired product display scene.

- One-Click Generation: Clicking the “Generate” button prompts Kling AI to transform the static images into a professional animated product display video, ready for use across various marketing channels.

- In-Depth Resource: Tony Pu, Head of Product Marketing & Operations at Kling AI, detailed how to use Kling AI in a workshop focused on enhancing advertising and creative production workflows.

What Can Practitioners Consider?

- Practitioners (Marketers, E-commerce Sellers, Advertising Creatives): This Kling AI feature provides a powerful and potentially cost-effective tool to quickly convert static product images into engaging video content. It greatly enriches marketing material formats and can enhance product presentation effectiveness and potential conversion rates without requiring complex video editing skills.

- Ordinary People (Small Business Owners, Content Creators): This significantly lowers the barrier to producing professional-grade product videos, enabling individuals or small teams with limited resources to create visually appealing dynamic advertisements to better showcase their products or ideas. It’s another example of AI tools simplifying creative processes and empowering individuals.

Product Website: https://klingai.com/

PART 08: Google DeepMind Releases AGI Safety Blueprint

Highlights?

- Major Report: Google DeepMind released a detailed 145-page report outlining its safety strategy for Artificial General Intelligence (AGI).

- Timeline Warning: The report predicts that AGI matching top-tier human skill levels could emerge as early as 2030, and it warns that such systems could pose an existential threat capable of “permanently destroying humanity.”

- Risk Identification: Highlights the risk of “Deceptive Alignment,” where AI might intentionally hide its true goals, noting that current LLMs already show this potential.

- Response Strategy: Proposes specific safety recommendations focusing on two main areas: preventing misuse (e.g., conducting cybersecurity assessments, setting access controls) and addressing misalignment (e.g., enabling AI to recognize uncertainty and escalate decisions to humans when necessary).

- Peer Comparison: The report also compares its safety approach with competitors’, commenting, for example, on OpenAI’s focus on automating alignment research and on Anthropic’s comparatively lesser emphasis on robust training, monitoring, and security.
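The misalignment mitigation mentioned above (having the AI recognize uncertainty and escalate decisions to humans) can be illustrated with a simple confidence threshold. This is a toy sketch under stated assumptions, not DeepMind's method: the `decide` function, the score format, the `ask_human` action, and the 0.8 threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Decision:
    action: str
    confidence: float
    escalated: bool


def decide(action_scores: Dict[str, float], threshold: float = 0.8) -> Decision:
    """Pick the highest-scoring action, deferring to a human when unsure.

    Scores are assumed to be non-negative; confidence is the winning
    action's share of the total score.
    """
    total = sum(action_scores.values())
    if total <= 0:
        raise ValueError("action scores must sum to a positive value")
    best_action, best_score = max(action_scores.items(), key=lambda kv: kv[1])
    confidence = best_score / total
    if confidence < threshold:
        # Too uncertain to act autonomously: hand the decision to a person.
        return Decision("ask_human", confidence, True)
    return Decision(best_action, confidence, False)


confident = decide({"approve": 9.0, "reject": 1.0})
uncertain = decide({"approve": 6.0, "reject": 4.0})

print(confident.action, confident.escalated)  # approve False
print(uncertain.action, uncertain.escalated)  # ask_human True
```

The design choice worth noting is that escalation is the default whenever confidence falls below the threshold, so the system fails toward human oversight rather than toward autonomous action.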

What Can Practitioners Consider?

- Practitioners (AI Researchers, Policymakers, Ethicists): This report marks a shift in the AGI safety discussion from abstract theory to concrete engineering planning and strategic deployment. It not only showcases DeepMind’s deep thinking on risks and proposed solutions but also sparks debate about the effectiveness of different safety paths. It highlights the immense challenge of ensuring safety protocols are universally adopted (“whack-a-mole” difficulty) amidst the proliferation of labs and open-source models globally.

- Ordinary People (The Public): This report raises public awareness of the potentially huge risks of AGI (including existential threats) and how top AI research institutions are seriously considering and planning responses. It underscores how critical and urgent safety and ethical considerations are alongside the pursuit of more powerful AI, while also revealing the real-world difficulties in achieving unified global action and regulation.

AI Tech News Daily Summary

From the refinement of Midjourney V7 to the intelligent exploration of Genspark, from innovative AI applications in education and marketing to deep concerns about AGI safety and human-machine boundaries, today’s AI News Daily paints a picture of an intelligent era characterized by accelerated development, deep penetration, and abundant opportunities and challenges. Technology is iterating at an unprecedented pace, continuously shaping our ways of working, learning, and living. Staying informed and embracing change will be key to navigating the intelligent wave ahead.

ZC Digitals
