📌 Table of Contents:

- 🏆 Alibaba’s Qwen2.5-Omni Tops Global Multimodal Leaderboard

- 🚀 Microsoft CEO Reveals Future Vision of “Doubling Every Three Months”

- 👓 Meta’s First AR Glasses with Screen, Hypernova, Poised for Launch

- 🤖 ZHIYUAN Robot Partners with Pi to Explore New Frontiers in Embodied AI

- 🎬 Luma AI Enables Precise Cinematic Camera Control with Natural Language

- 💻 Augment Agent: Your Next-Generation AI Coding Partner

- 🧠 NotebookLM Introduces AI-Powered Interactive Mind Maps 🗺️

- ❤️ Tinder Launches Voice Dating Game to Practice Flirting Skills

PART 01: Qwen2.5-Omni Tops Global Multimodal Leaderboard

What’s the highlight?

Alibaba’s Tongyi Qianwen team released Qwen2.5-Omni, a lightweight multimodal large model with only 7 billion parameters. Despite its small size, it packs a powerful punch: it topped the Hugging Face trending list, ahead of many models with far more parameters. Its core innovation is cross-modal understanding: it can process text, image, audio, and video inputs together, hold real-time audio-video conversations, and generate smooth, natural speech. Its Thinker-Talker architecture and TMRoPE technique help it perform strongly on multimodal benchmarks such as OmniBench, and its understanding of voice commands rivals its performance on pure text input.

What can practitioners consider?

- Practitioners: This shows that model size isn’t the sole determinant of performance; clever architecture and technical innovation can also deliver outstanding results, which is encouraging for teams with limited resources. Because the model is open source, it should also accelerate development and deployment across the multimodal AI ecosystem.

- General Public: We will experience smarter, more natural AI applications in the future. Imagine your smart assistant not only understanding your words but also seeing pictures and videos, interacting with you in real-time voice, or bringing more immersive audio-visual entertainment experiences. Smaller models also mean AI is easier to integrate into everyday devices like phones and speakers.

Access Method:

https://huggingface.co/spaces/Qwen/Qwen2.5-Omni-7B-Demo

PART 02: Future Vision of “Doubling Every Three Months”

What’s the highlight?

Microsoft CEO Satya Nadella pointed out that thanks to the “Scaling Law” and advancements in computing and algorithms, AI capabilities are improving at an astonishing rate, roughly doubling every three months, far exceeding Moore’s Law. He foresees AI bringing three fundamental breakthroughs: first, more natural multimodal interaction interfaces (conversing like humans); second, powerful planning, reasoning, and logical thinking abilities; third, the ability for deep thinking based on long-term memory and background knowledge. These three capabilities will reshape the entire technology stack.
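To put “doubling every three months” in perspective, here is a rough back-of-the-envelope sketch that converts a doubling period into a yearly growth factor. The function name `annual_growth` is ours, and the comparison assumes the common reading of Moore’s Law as a doubling roughly every two years; treat it as an illustration of the arithmetic, not a measurement of AI progress.

```python
def annual_growth(doubling_months: float) -> float:
    """Growth factor over 12 months for a quantity that doubles every `doubling_months` months."""
    return 2 ** (12 / doubling_months)


# Doubling every three months, as quoted above: four doublings per year.
ai_per_year = annual_growth(3)

# Assumption: Moore's Law taken as a doubling roughly every 24 months.
moore_per_year = annual_growth(24)

print(f"Doubling every 3 months:  {ai_per_year:.1f}x per year")     # 16.0x per year
print(f"Doubling every 24 months: {moore_per_year:.2f}x per year")  # 1.41x per year
```

In other words, if the quoted rate held, capability would grow about 16-fold in a year, versus roughly 1.4-fold under the two-year doubling assumed here.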

What can practitioners consider?

- Practitioners: Nadella’s view points the way: invest heavily in multimodal interaction research; develop AI applications capable of handling complex planning and decision-making; build personalized AI services with long-term memory and deep understanding capabilities.

- General Public: AI is becoming increasingly “smart” and “understanding.” In the future, communicating naturally and smoothly with AI assistants, having AI help with complex planning (like travel itineraries), and enjoying highly personalized services (like news recommendations, health management) will become possible, making life more convenient and intelligent.

PART 03: Meta’s First AR Glasses with Screen, Hypernova

What’s the highlight?

Meta plans to launch its first AR glasses with a screen, codenamed “Hypernova,” by the end of this year. Unlike the earlier Ray-Ban Meta glasses, Hypernova will have a display built into the lower right corner of the right lens, a placement chosen to avoid the awkward “eye-rolling” effect in social situations. The glasses are expected to cost over $1,000, will run a customized version of Android, and will likely rely on a phone app at first. Interaction methods will include touch controls on the frame and gesture control through a “neural wristband.” Meta is also developing more advanced models in parallel.

What can practitioners consider?

- Practitioners: This is a significant step towards mainstream consumer AR, providing developers with a new platform and imaginative space to explore AR applications in entertainment, office work, education, etc. The single-eye display, custom system, and neural wristband design also offer new technical directions for the industry.

- General Public: If the experience is good, AR glasses could become the next-generation personal computing device after smartphones, bringing entirely new ways of accessing information and interacting (e.g., navigation and information prompts shown directly in view). However, the high price and the potential discomfort of a single-eye display are early challenges.

Related Link: https://www.meta.com/

PART 04: ZHIYUAN Robot Partners with Pi to Explore Embodied AI

What’s the highlight?

Chinese robotics startup ZHIYUAN Robot announced a deep technical collaboration with the American embodied AI company Physical Intelligence (Pi) to jointly develop embodied intelligent robots that can perform long-horizon, complex tasks in dynamic environments. ZHIYUAN also brought in world-leading robotics expert Dr. Jianlan Luo (a former Google X/DeepMind research scientist) to lead its research center. The collaboration has already yielded initial results, showing that general-purpose models can drive robots to perform a variety of tasks.

What can practitioners consider?

- Practitioners: This reflects the global collaboration trend in embodied intelligence, accelerating technological breakthroughs through complementary strengths. The addition of top talent will significantly enhance R&D capabilities, potentially leading to key advancements in this frontier field.

- General Public: The goal of embodied intelligence is to enable robots to better understand the physical world and collaborate with humans. In the future, more dexterous and capable robots could enter homes, hospitals, and factories, taking on tasks like housework, nursing, and precision manufacturing, improving our quality of life and productivity.

Related Link: https://www.zhiyuan-robot.com/

PART 05: Luma AI Enables Precise Cinematic Camera Control with Natural Language

What’s the highlight?

Luma Labs introduced a new “Camera Motion Concepts” feature for its AI video generation model Ray2. Users can now precisely control over 20 preset cinematic camera movements using simple natural language (e.g., “slow push in from a low angle”). This innovation uses a new method called “Concepts,” teaching the model new skills with just a few samples, even allowing for combinations of unique or physically impossible camera movements while maintaining high video quality and style.

What can practitioners consider?

- Practitioners: This significantly lowers the barrier to controlling camera movements in professional video production, providing content creators and marketers with a powerful and convenient tool to easily achieve a “blockbuster feel,” greatly expanding creative boundaries.

- General Public: We will see more visually stunning AI-generated video in the future. Whether it’s short entertainment clips, instructional videos, or social sharing, achieving a professional and engaging look will become easier. AI video creation is becoming simpler and more fun than ever.

Related Link: https://lumalabs.ai/

PART 06: Augment Agent: AI Coding Partner

What’s the highlight?

Augment Code launched Augment Agent, a powerful AI coding assistant for professional developers. It goes beyond code completion to handle end-to-end development tasks such as adding new features across multiple files, automatically running tests, creating project management tickets (Linear), and opening pull requests. It outperforms competitors like GitHub Copilot on the SWE-bench Verified benchmark. Its core strengths are a deep understanding of codebase context, adaptive learning, and support for multimodal inputs such as screenshots and Figma designs.

What can practitioners consider?

- Practitioners: Tools like this can significantly boost development efficiency by automating many repetitive coding and process tasks, allowing engineers to focus more on core logic and innovation, thus shortening development cycles and improving software quality.

- General Public: While we don’t use these tools directly, their proliferation means faster software development and more frequent iterations. Ultimately, we will enjoy more powerful, stable, and innovative applications and services, enhancing our digital life experience.

Product Website 🔗:

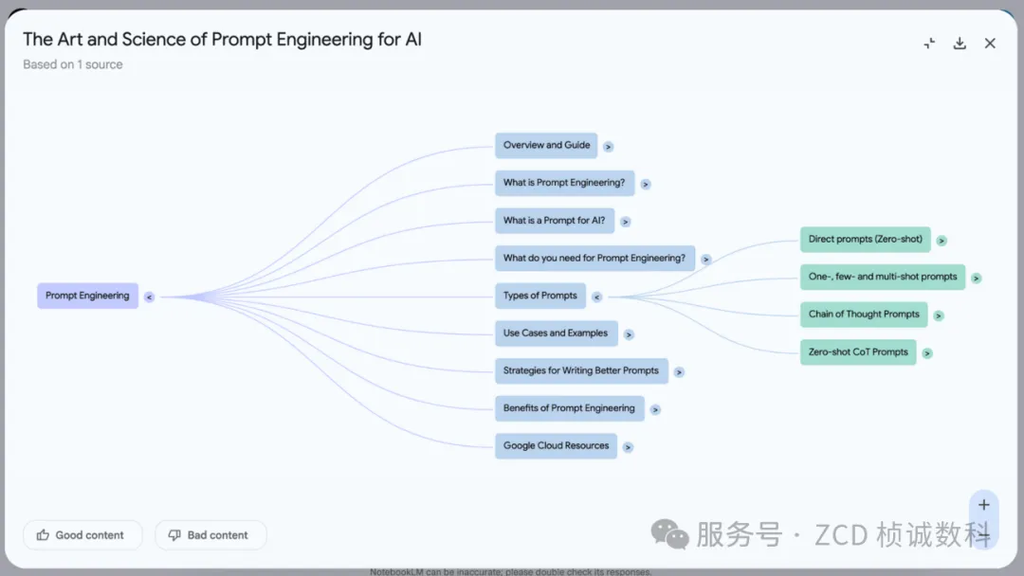

PART 07: AI-Powered Interactive Mind Maps

What’s the highlight?

Google’s NotebookLM (a note-taking and research tool) added a mind mapping feature. After users upload documents, the AI (based on the Gemini model) automatically analyzes the text, identifies key concepts and their relationships, and generates an interactive visual knowledge network. Users can click nodes to ask questions, jump between concepts to explore, and intuitively discover hidden connections. Each node links back to the original source text.

What can practitioners consider?

- Practitioners: For professionals dealing with large amounts of information (like researchers and analysts), this offers a completely new way to manage and explore knowledge, allowing for a more intuitive and efficient understanding of complex topics and sparking new insights.

- General Public: This makes learning and understanding complex knowledge easier and more engaging. Organizing thoughts in a visual map makes it easier to grasp the structure of a topic and discover connections between concepts, improving learning efficiency and memory retention.

Product Website: https://notebooklm.google.com/

PART 08: Tinder Launches Voice Dating Game to Practice Flirting Skills

What’s the highlight?

Dating app Tinder launched a new feature called “The Game Game,” a voice conversation experience powered by OpenAI technology (such as GPT-4o). Users can talk with realistic AI virtual characters to practice and test their flirting and communication skills. The AI provides real-time feedback and ratings based on the user’s performance (charm, engagement, etc.). To encourage real social interaction, the feature is limited to five plays per day.

What can practitioners consider?

- Practitioners: Demonstrates the innovative potential of AI in social applications and soft skills training, using real-time voice interaction and advanced language models to create immersive practice environments.

- General Public: For those wanting to improve social or communication skills and build confidence, this provides a safe and fun “practice ground.” Through simulated interaction with AI and instant feedback, users can understand their communication style and learn how to improve. However, it’s important to note this is supplementary; ultimately, one must engage in real social interactions and avoid over-reliance.

Related Link: https://www.tinderpressroom.com/

AI Tech News Daily Summary

From underlying models to top-level applications, the wave of artificial intelligence innovation is impacting the world with unprecedented breadth and depth. The major trends are clear: lightweight models, multimodality, richer interaction, and greater intelligence. Embracing these changes and understanding their value allows both industry experts and the general public to better seize opportunities in the coming intelligent era. The future is here; let’s wait and see how AI will continue to shape an even more exciting technological landscape!

ZC Digitals

🚀 Leading enterprise digital transformation, shaping the future of industries together. We specialize in creating customized digital systems integrated with AI, achieving intelligent upgrades and deep integration of business processes. Relying on a core team with top tech backgrounds from MIT, Microsoft, etc., we help you build powerful AI-driven infrastructure, enhance efficiency, drive innovation, and achieve industry leadership.